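RNN: Recurrent Neural Networks

What is a recurrent neural network?

A recurrent neural network (RNN) is a class of neural network architectures designed for sequence data. Unlike a conventional feedforward network, an RNN has a form of "memory": it carries a hidden state from one time step to the next, which lets it capture temporal dependencies in the data.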

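Core features:

- Recurrent connections: the hidden state computed at one time step is fed back in at the next, so information keeps flowing through the network across time steps

- Parameter sharing: the same weights are reused at every time step, so the number of parameters does not grow with the sequence length

- Sequence processing: variable-length input sequences can be handled, which makes RNNs a good fit for time-series data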
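The three features above can be seen in a dependency-free sketch. This is an illustration only, not the implementation used in this post: the function name `run_scalar_rnn` and the weight values `0.5` and `0.8` are assumptions made up for the example, and the "network" is reduced to a single scalar state.

```python
import math


def run_scalar_rnn(inputs, w_x=0.5, w_h=0.8):
    """Toy scalar RNN: the same two weights are applied at every time step."""
    h = 0.0                # state carried from one step to the next (the "memory")
    states = []
    for x in inputs:       # the loop works for any sequence length
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states


shorter = run_scalar_rnn([1.0, 0.0, -1.0])
longer = run_scalar_rnn([1.0, 0.0, -1.0, 2.0, 0.5])
print(len(shorter), len(longer))  # 3 5
```

Both calls use the same two weights even though the sequences differ in length, and each state depends on the inputs seen so far through the carried value `h`.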

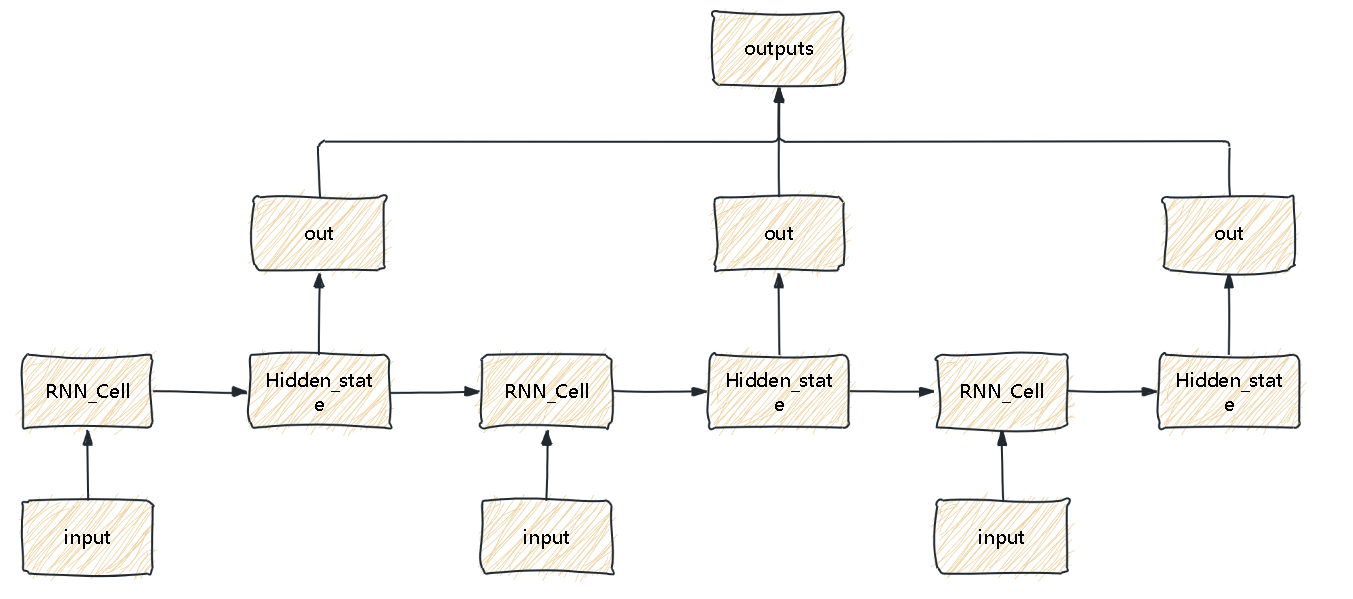

Basic structure:

The basic RNN unit holds a hidden state, which is updated at every time step:

- new hidden state = f(current input, previous hidden state)
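One common choice of f is a tanh applied to a linear combination of the two arguments. The symbols $W_x$ (input weights) and $W_h$ (hidden weights) are notation introduced here for illustration; the form shown has no bias term and starts from a zero state, which is an assumption rather than a requirement:

$$
h_t = \tanh\left(x_t W_x + h_{t-1} W_h\right), \qquad h_0 = 0
$$

Here $x_t$ is the input at time step $t$ and $h_t$ is the hidden state after processing it.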

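Here is a simple example.

A simple RNN example (many-to-many)

Let's build a simple recurrent neural network that mirrors the diagram above. It produces one output for every input time step, which is what makes it many-to-many.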

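    import torch
    from torch import nn


    class RNNCell(nn.Module):
        """One recurrent step: h_t = tanh(h_{t-1} @ w_hidden + x_t @ w_input)."""

        def __init__(self, input_size, hidden_size):
            super().__init__()
            self.input_size = input_size
            self.hidden_size = hidden_size
            # nn.Parameter registers the weights with the module so they can be trained;
            # a plain torch.randn tensor would be invisible to the optimizer.
            self.w_hidden = nn.Parameter(torch.randn(hidden_size, hidden_size))
            self.w_input = nn.Parameter(torch.randn(input_size, hidden_size))
            self.tanh = nn.Tanh()

        def forward(self, x, hidden_state=None):
            # x: (N, input_size)
            N, input_size = x.shape
            if hidden_state is None:
                # Start from an all-zero state on the same device as the input.
                hidden_state = torch.zeros(N, self.hidden_size, device=x.device, dtype=x.dtype)
            hidden_state = self.tanh(hidden_state @ self.w_hidden + x @ self.w_input)
            return hidden_state


    class RNN(nn.Module):
        def __init__(self, input_size, hidden_size):
            super().__init__()
            self.cell = RNNCell(input_size, hidden_size)
            self.w_output = nn.Parameter(torch.randn(hidden_size, hidden_size))

        def forward(self, x, hidden_state=None):
            # x: (N, L, input_size)
            N, L, input_size = x.shape
            outputs = []
            for i in range(L):
                x_i = x[:, i]                               # input at time step i
                hidden_state = self.cell(x_i, hidden_state)
                out = hidden_state @ self.w_output          # one output per time step
                outputs.append(out)
            outputs = torch.stack(outputs, dim=1)           # (N, L, hidden_size)
            return outputs, hidden_state


    if __name__ == "__main__":
        x = torch.randn(5, 3, 10)   # batch of 5, sequence length 3, 10 features
        model = RNN(10, 20)
        y, h = model(x)
        print(y.shape)              # torch.Size([5, 3, 20])
        print(h.shape)              # torch.Size([5, 20])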

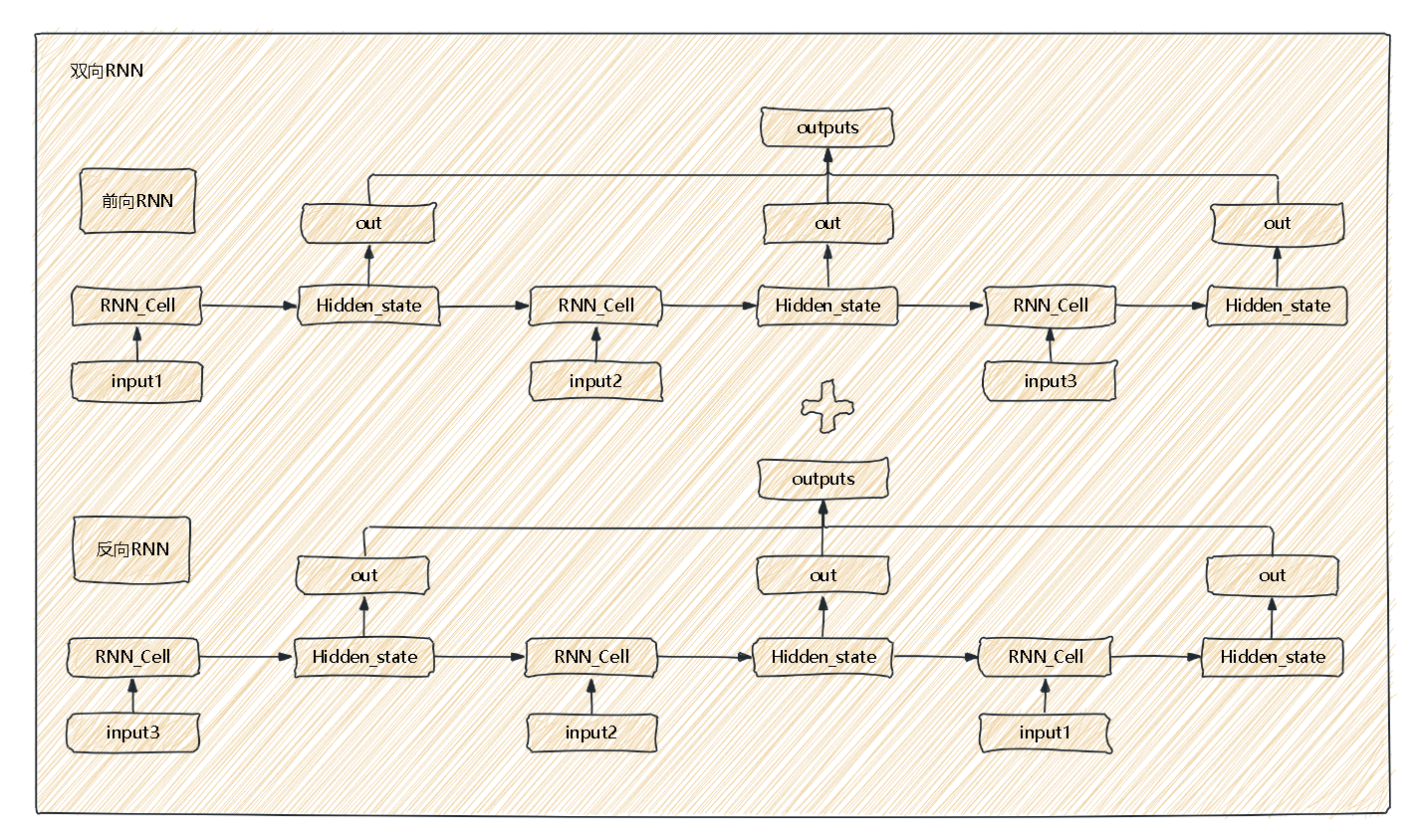

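Bidirectional recurrent neural networks

A bidirectional RNN combines two independent RNNs that run over the same sequence, one reading it front to back and the other back to front. Each direction keeps its own hidden state and produces its own output, and the two outputs are concatenated at every time step.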
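The detail that is easiest to get wrong is alignment: the backward pass emits its outputs in reversed time order, so they have to be flipped back before being paired with the forward outputs. A dependency-free sketch under toy assumptions (scalar states, and the hypothetical helper names `scan` and `bidirectional` with made-up weights):

```python
import math


def scan(inputs, w_x, w_h):
    """Run a toy scalar RNN over `inputs` and return the state at every step."""
    h, states = 0.0, []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h)
        states.append(h)
    return states


def bidirectional(inputs):
    fwd = scan(inputs, w_x=0.5, w_h=0.8)          # reads the sequence left to right
    bwd = scan(inputs[::-1], w_x=-0.3, w_h=0.6)   # separate weights, reads right to left
    bwd = bwd[::-1]                               # restore the original time order
    return list(zip(fwd, bwd))                    # pair both directions per time step


pairs = bidirectional([1.0, 0.0, -1.0])
print(len(pairs))  # 3
```

After the flip, `pairs[t]` holds the forward state that has seen inputs up to step `t` and the backward state that has seen inputs from step `t` to the end.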

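    import torch
    from torch import nn


    class BiRNN(nn.Module):
        def __init__(self, input_size, hidden_size):
            super().__init__()
            self.input_size = input_size
            self.hidden_size = hidden_size
            # Forward-direction RNN cell and its linear output layer
            self.forward_cell = nn.RNNCell(input_size, hidden_size)
            self.forward_Linear = nn.Linear(hidden_size, hidden_size)
            # Backward-direction RNN cell and its linear output layer
            self.backward_cell = nn.RNNCell(input_size, hidden_size)
            self.backward_Linear = nn.Linear(hidden_size, hidden_size)

        def forward(self, x, hidden=None):
            # x: (N, L, input_size)
            N, L, input_size = x.shape
            if hidden is None:
                # One initial hidden state per direction: (2, N, hidden_size)
                hidden = torch.zeros(2, N, self.hidden_size, device=x.device, dtype=x.dtype)

            # Forward direction: time steps 0 .. L-1
            h_forward = hidden[0]
            out_forward = []
            for i in range(L):
                h_forward = self.forward_cell(x[:, i], h_forward)
                out_forward.append(self.forward_Linear(h_forward))
            out_forward = torch.stack(out_forward, dim=1)

            # Backward direction: same loop over the time-reversed sequence
            x_reversed = torch.flip(x, dims=[1])
            h_backward = hidden[1]
            out_backward = []
            for i in range(L):
                h_backward = self.backward_cell(x_reversed[:, i], h_backward)
                out_backward.append(self.backward_Linear(h_backward))
            # Flip back so index t lines up with the forward output at time step t
            # (without this, the two halves of the concatenation are misaligned).
            out_backward = torch.flip(torch.stack(out_backward, dim=1), dims=[1])

            outputs = torch.cat((out_forward, out_backward), dim=-1)  # (N, L, 2 * hidden_size)
            hidden = torch.stack([h_forward, h_backward])             # (2, N, hidden_size)
            return outputs, hidden


    if __name__ == "__main__":
        x = torch.randn((5, 3, 10))
        model = BiRNN(10, 20)
        outputs, hidden = model(x)
        print(outputs.shape)   # torch.Size([5, 3, 40])
        print(hidden.shape)    # torch.Size([2, 5, 20])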
